Diffusion-Based Optimal Grasp Detection

Abstract

This project implements a diffusion-based optimal grasp detection system. It takes an RGB-D image as input and segments the object's point cloud. The segmented point cloud is fed into a diffusion-based grasp model that generates candidate grasps, which are then checked for collisions and scored. The highest-scoring collision-free grasp is selected as the optimal grasp and executed in a real-world or simulated environment.

Technical Implementation

Algorithm Pipeline

- Input: RGB-D Image

- Preprocessing: Point cloud segmentation of the object

- Core Algorithm: Diffusion-based grasp generation

- Evaluation: Scoring for collision-free and optimal grasps

- Execution: Real-world or simulation environment
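As a rough illustration of the preprocessing step, the sketch below back-projects a depth image into a camera-frame point cloud using the pinhole camera model and keeps only the points inside an object mask. It assumes metric depth, known camera intrinsics (`fx`, `fy`, `cx`, `cy`), and a binary mask produced by some upstream segmentation method; the project does not say how the mask is obtained, and the function names are hypothetical.

```python
import numpy as np


def depth_to_points(depth, fx, fy, cx, cy):
    """Back-project an (H, W) depth image in meters into an (H*W, 3)
    camera-frame point cloud with the pinhole model (assumed intrinsics)."""
    h, w = depth.shape
    # u holds pixel column indices, v holds row indices; both have shape (H, W).
    u, v = np.meshgrid(np.arange(w), np.arange(h))
    z = depth
    x = (u - cx) * z / fx
    y = (v - cy) * z / fy
    # Row-major flatten, so point index = row * W + col.
    return np.stack([x, y, z], axis=-1).reshape(-1, 3)


def segment_object(depth, mask, fx, fy, cx, cy):
    """Keep points inside a binary object mask (hypothetical upstream
    segmentation output) that also have valid, positive depth."""
    points = depth_to_points(depth, fx, fy, cx, cy)
    keep = mask.astype(bool).reshape(-1) & (depth.reshape(-1) > 0)
    return points[keep]
```

Filtering on `depth > 0` drops pixels where the sensor returned no measurement, which many RGB-D cameras encode as zero.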

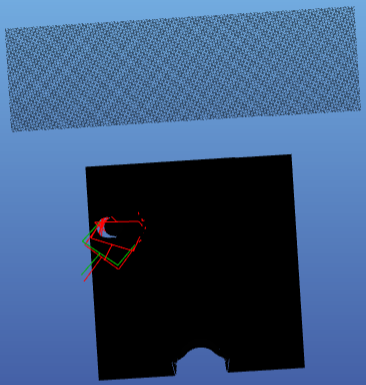

Visualization of the optimal grasp detection process, showing the input RGB-D image, point cloud segmentation, and the scored optimal grasp.

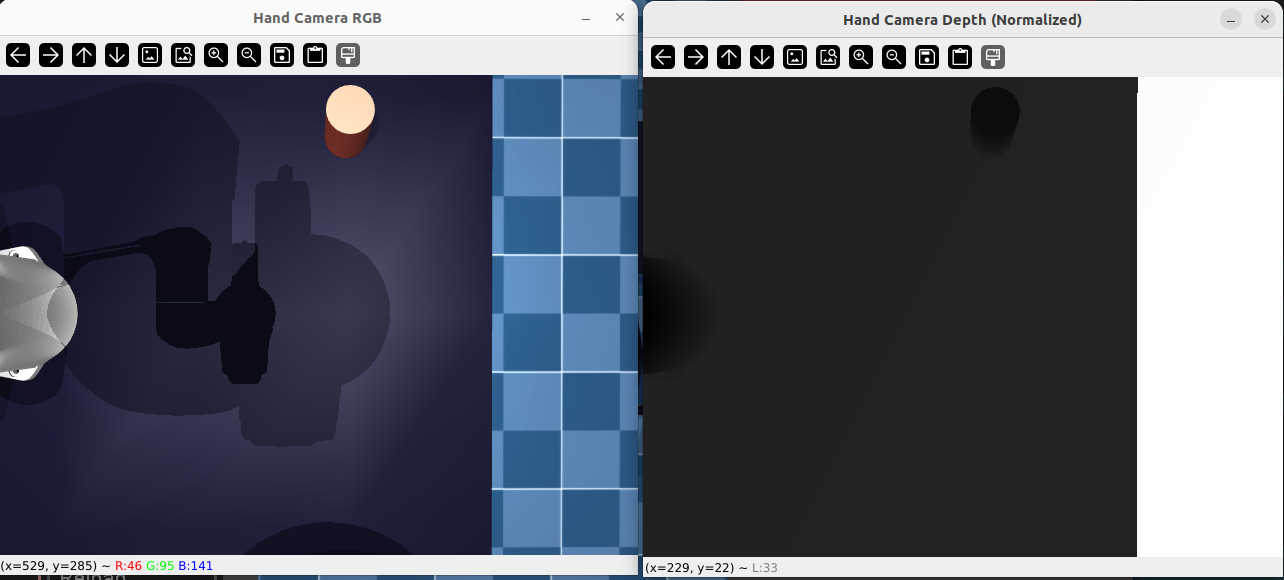

Visualization of the generated grasp poses that are determined to be collision-free within the simulation environment.


Key Features

Diffusion Model

Utilizes a diffusion-based approach to generate diverse and high-quality grasp candidates.
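The project does not state which diffusion formulation it uses. As one possibility, here is a minimal DDPM-style reverse sampling loop that turns Gaussian noise into grasp candidates conditioned on the segmented point cloud. The `denoiser` (standing in for a trained noise-prediction network), the linear noise schedule, and the flat grasp vector of size `dim` are all assumptions for illustration.

```python
import numpy as np


def ddpm_sample_grasps(denoiser, cond, num_grasps, dim, num_steps=50, seed=0):
    """DDPM ancestral sampling of `num_grasps` grasp vectors of size `dim`.

    denoiser(x, t, cond) -> predicted noise with the same shape as x.
    It is a hypothetical stand-in for a trained network conditioned on
    the object point cloud `cond`.
    """
    rng = np.random.default_rng(seed)
    betas = np.linspace(1e-4, 0.02, num_steps)  # assumed linear noise schedule
    alphas = 1.0 - betas
    alpha_bars = np.cumprod(alphas)

    # Each candidate starts from independent Gaussian noise.
    x = rng.standard_normal((num_grasps, dim))
    for t in reversed(range(num_steps)):
        eps = denoiser(x, t, cond)
        # Posterior mean: (x_t - beta_t / sqrt(1 - alpha_bar_t) * eps) / sqrt(alpha_t)
        mean = (x - betas[t] / np.sqrt(1.0 - alpha_bars[t]) * eps) / np.sqrt(alphas[t])
        if t > 0:
            # Add noise with variance beta_t on all but the final step.
            x = mean + np.sqrt(betas[t]) * rng.standard_normal(x.shape)
        else:
            x = mean
    return x
```

Drawing each sample from independent noise is what yields a diverse set of candidates. In practice the raw output would still need to be mapped to valid poses, for example by normalizing any rotation components.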

Collision Avoidance

Integrated collision detection ensures that generated grasps are feasible and safe for execution.
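One simple way to implement such a check, assumed here rather than taken from the project, is to approximate the gripper by a few axis-aligned boxes in its own frame, transform the object points into that frame, and reject any grasp whose boxes contain points. The box geometry and function names are hypothetical.

```python
import numpy as np


def points_in_box(points, box_min, box_max):
    """Boolean mask of points lying inside an axis-aligned box."""
    return np.all((points >= box_min) & (points <= box_max), axis=1)


def is_collision_free(points, R, t, boxes):
    """Check a grasp pose against an object point cloud.

    points: (N, 3) object points in the world frame.
    R, t:   gripper rotation (3, 3) and position (3,) in the world frame,
            so a gripper-frame point q maps to R @ q + t.
    boxes:  list of (min_corner, max_corner) pairs describing hypothetical
            gripper parts (fingers, palm) in the gripper frame.
    """
    # World -> gripper frame: q = R^T (p - t), written for row vectors.
    local = (points - t) @ R
    for box_min, box_max in boxes:
        if np.any(points_in_box(local, np.asarray(box_min), np.asarray(box_max))):
            return False
    return True
```

This only tests against the object's own points; a complete check would also include the table and any surrounding scene geometry.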

Optimal Scoring

A scoring mechanism ranks the candidates and selects the most stable and robust grasp.
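The project does not describe its scoring function, so this selection sketch takes it as a parameter: it filters the candidates through a collision check and returns the highest-scoring survivor. Both `collision_free` and `score` are hypothetical placeholders for whatever feasibility test and stability metric the system actually uses.

```python
from typing import Callable, Optional, Sequence, TypeVar

G = TypeVar("G")  # any grasp representation


def select_best_grasp(
    candidates: Sequence[G],
    collision_free: Callable[[G], bool],
    score: Callable[[G], float],
) -> Optional[G]:
    """Return the highest-scoring collision-free grasp, or None if none is feasible."""
    feasible = [g for g in candidates if collision_free(g)]
    if not feasible:
        return None
    # max() keeps the first candidate among equal scores.
    return max(feasible, key=score)
```

Filtering before scoring means an infeasible grasp can never be chosen, however well it scores.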

Results and Impact

The system identifies and executes optimal grasps in both simulation and real-world environments, handling a variety of objects robustly and accurately.

Conclusion

This project demonstrates the effectiveness of diffusion models in generating optimal grasps for robotic manipulation, bridging the gap between perception and action.